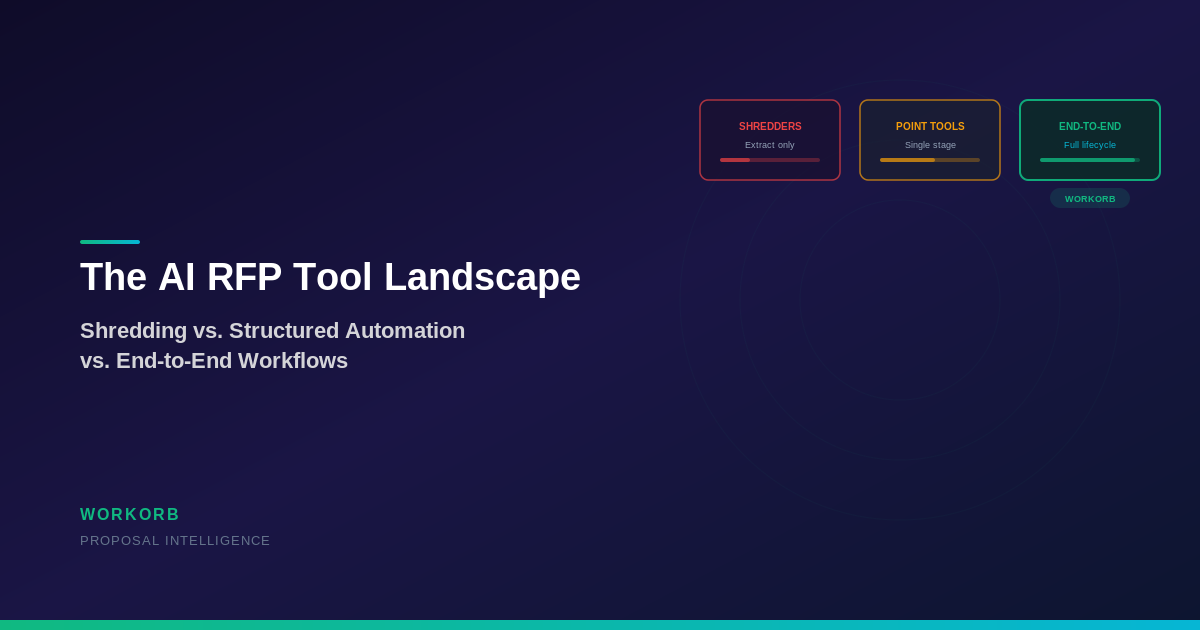

The AI RFP market has exploded with solutions, and every vendor claims to 'automate' the proposal process. But the word automation covers a wide spectrum of capabilities, and understanding where a tool sits on that spectrum is critical for making the right purchasing decision.

Category 1: Document shredders. These tools take a large RFP document and break it into structured components—extracting requirements, deadlines, evaluation criteria, and compliance obligations. They're essentially sophisticated document analysis platforms. They answer the question 'What does this RFP ask for?' but leave the actual response generation, compliance tracking, and submission to your team. Examples include tools that turn 'thousands of pages into a single source of truth' or deliver 'rapid extraction into structured insight.'

Category 2: Point automation tools. These handle specific stages of the RFP lifecycle well—automated drafting, compliance matrix generation, or scoring—but don't connect the stages. Your team still manually transfers outputs between tools, reformats data, and coordinates handoffs. They're faster than fully manual processes but create new bottlenecks at the integration points.

Category 3: End-to-end workflow platforms. These connect the entire lifecycle: intake, extraction, drafting, compliance, scoring, assembly, and submission. Data flows automatically between stages. Compliance is validated continuously, not checked once at the end. The proposal assembles from structured components rather than being manually compiled. Workorb sits firmly in this category.

Not all AI RFP platforms are built the same, and telling them apart requires looking beyond marketing language to actual workflow capabilities. Here's a practical framework for evaluating the current landscape and understanding what separates document analysis from true proposal automation.

Data continuity. Does information extracted during intake flow automatically into drafting and compliance? Or does your team re-enter data between stages? Data continuity is the single biggest differentiator between tools that save time and tools that just shift where time is spent.
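To make data continuity concrete, here is a minimal sketch of what "information flows automatically between stages" might look like: one shared record that intake, drafting, and compliance all read and update, so nothing is re-entered by hand. The `BidRecord` class, the stage functions, and the sample requirements are hypothetical illustrations, not any vendor's actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class BidRecord:
    """Hypothetical shared record that every stage reads and updates."""
    requirements: dict[str, str] = field(default_factory=dict)  # id -> requirement text
    drafts: dict[str, str] = field(default_factory=dict)        # id -> drafted response
    gaps: list[str] = field(default_factory=list)               # ids with no draft yet


def intake(record: BidRecord, extracted: dict[str, str]) -> BidRecord:
    # Store extracted requirements once; later stages reuse them.
    record.requirements.update(extracted)
    return record


def draft(record: BidRecord, answers: dict[str, str]) -> BidRecord:
    # Keep only answers keyed to a requirement captured at intake.
    for req_id, text in answers.items():
        if req_id in record.requirements:
            record.drafts[req_id] = text
    return record


def check_compliance(record: BidRecord) -> BidRecord:
    # A requirement with no draft is a compliance gap.
    record.gaps = [r for r in record.requirements if r not in record.drafts]
    return record


record = BidRecord()
intake(record, {"R1": "Provide SOC 2 report", "R2": "Describe support hours"})
draft(record, {"R1": "Our SOC 2 Type II report is attached."})
check_compliance(record)
print(record.gaps)  # ['R2']
```

A useful question to ask during a demo is whether the tool has an equivalent of this shared record, or whether each stage exports a file that someone has to import into the next.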

Output auditability. Can you trace every claim in your proposal back to a specific RFP requirement? Can evaluators follow a clear compliance matrix from their criteria to your response sections? Tools that produce auditable, traceable outputs give evaluators confidence. Tools that produce unstructured narratives leave evaluators guessing.
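As a rough illustration of traceability, a compliance matrix can be thought of as a mapping from each RFP requirement to the proposal sections that address it. The sketch below assumes hypothetical requirement IDs and section numbers; real tools will structure this differently.

```python
from dataclasses import dataclass, field


@dataclass
class Section:
    """Hypothetical proposal section tagged with the requirement IDs it answers."""
    section_id: str
    addresses: list[str] = field(default_factory=list)


def build_matrix(requirement_ids: list[str], sections: list[Section]) -> dict[str, list[str]]:
    # Start with every requirement so unanswered ones stay visible as empty rows.
    matrix: dict[str, list[str]] = {rid: [] for rid in requirement_ids}
    for section in sections:
        for rid in section.addresses:
            if rid in matrix:
                matrix[rid].append(section.section_id)
    return matrix


def untraced(matrix: dict[str, list[str]]) -> list[str]:
    # Requirements that no section claims to address.
    return [rid for rid, secs in matrix.items() if not secs]


sections = [Section("3.1", ["R1", "R3"]), Section("4.2", ["R1"])]
matrix = build_matrix(["R1", "R2", "R3"], sections)
print(matrix)            # {'R1': ['3.1', '4.2'], 'R2': [], 'R3': ['3.1']}
print(untraced(matrix))  # ['R2']
```

An evaluator reading the matrix can go from any criterion to the exact sections that respond to it, and an empty row flags a requirement the proposal has not yet answered.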

Collaboration architecture. How does the tool handle multi-person, multi-team bid efforts? Can subject matter experts contribute to specific sections without disrupting others? Can managers see real-time compliance status across all sections? RFPs are team efforts, and the tool needs to support that reality rather than treating proposal development as a single-author activity.

Governance and control. AI-generated content requires oversight. Does the tool support review workflows, approval gates, and version control? Can your team define drafting rules and quality standards that the AI follows? The best tools augment human judgment rather than replacing it—generating structured first drafts that experts refine rather than black-box outputs that nobody can verify.

The RFP tool market is consolidating around end-to-end platforms for a simple reason: buyers have learned that point solutions create integration headaches. A team using one tool for extraction, another for drafting, and a third for compliance tracking spends significant time moving data between systems, reconciling formats, and maintaining consistency across disconnected tools.

End-to-end platforms like Workorb eliminate this integration tax. When extraction feeds directly into drafting, drafting into compliance validation, and compliance validation into proposal assembly, the entire cycle compresses. Teams spend their time on strategic decisions (how to position against competitors, which case studies best demonstrate relevance, where to invest extra effort on high-value sections) rather than on data transfer, formatting, and compliance checking.

The next evolution is already underway: platforms that not only automate the current RFP but learn from outcomes to improve the next one. Win/loss analysis feeds back into content libraries, template refinement, and strategic positioning. Each proposal makes the platform smarter. Each outcome refines the system's understanding of what evaluators value. This is where Workorb is headed—not just automating RFP response, but building an institutional advantage that compounds with every pursuit.